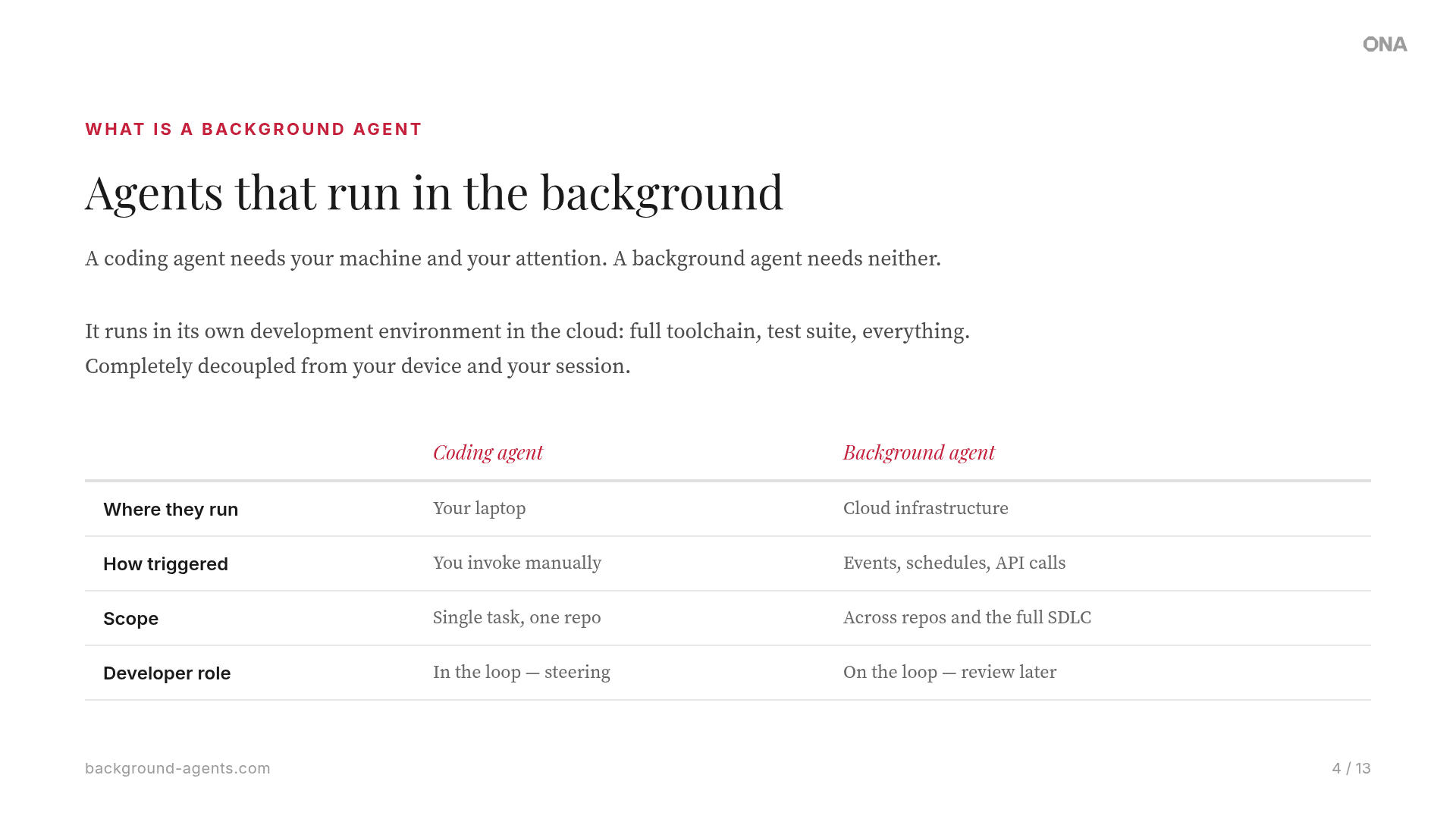

What is a background agent?

A coding agent needs your machine and your attention. A background agent needs neither.

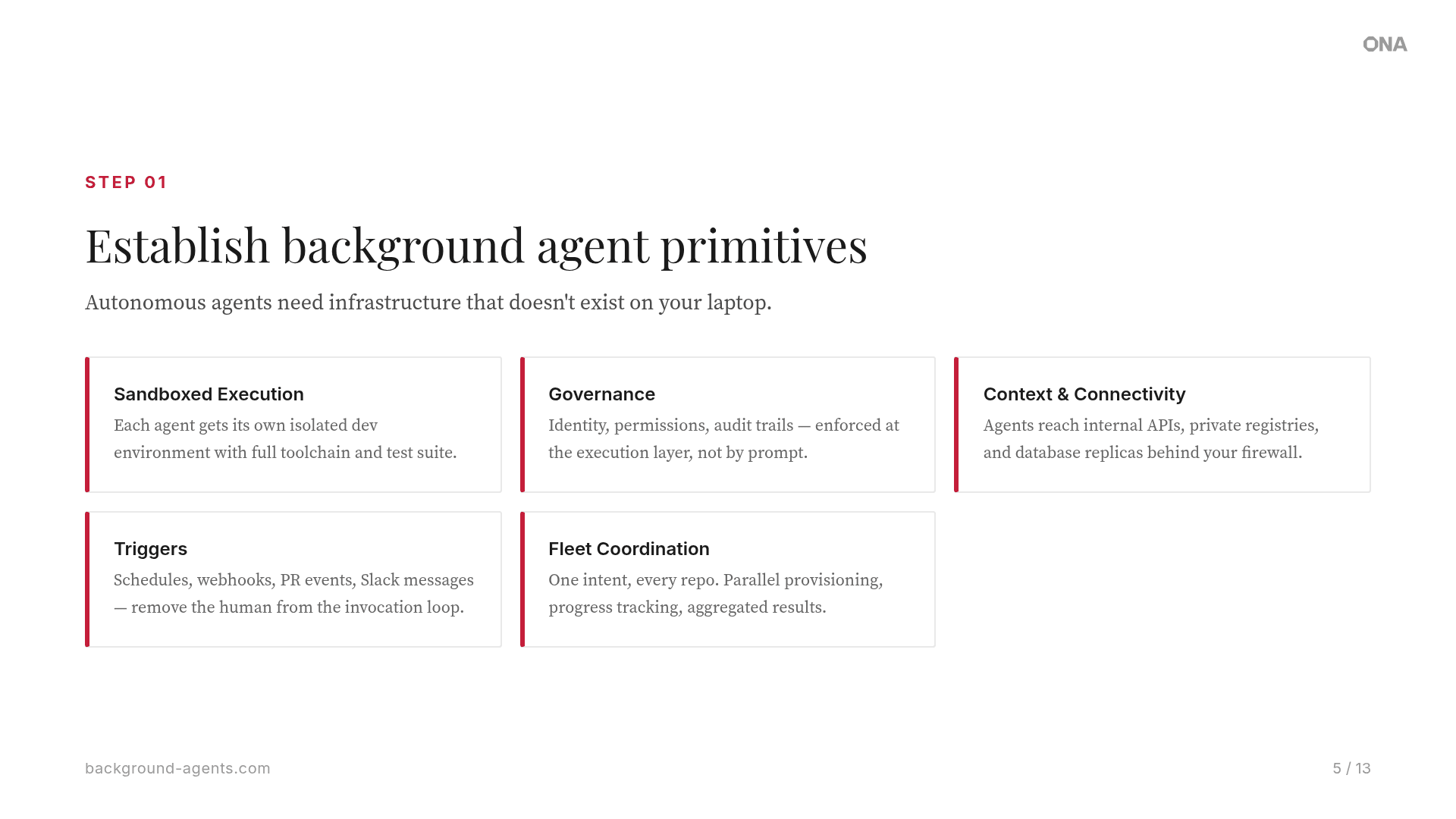

Background agents run in their own development environment in the cloud, with a full toolchain, test suite, and access to internal systems, completely decoupled from your device. Kick them off from your laptop and check the results from your phone. Trigger them from a PR, a Slack thread, a Linear ticket, or a webhook.

Background agents change the engineering operating model. Instead of humans initiating every task, agents respond to events autonomously. When a CVE is published, agents scan your repos and open a PR. When a dependency is flagged, agents generate patches.

The destination? A software factory, where fleets of agents transform the entire SDLC.