Prerequisites

Before starting, ensure you have:

- GCP project with billing enabled, sufficient quotas, and required GCP APIs enabled

- VPC and subnet: a custom VPC with a runner subnet. The runner subnet hosts both the runner service and environment VMs. Internal load balancers require additional subnets.

  Optional: Private Google Access. If your runner subnet does not have external internet access, enable Private Google Access on the subnet so VMs can reach GCP services through Google’s internal network. See GCP services and APIs required for the full list of services that need to be reachable.

- Domain name that you control with DNS modification capabilities

- SSL/TLS certificate with Subject Alternative Names (SANs) for both the root domain and wildcard: yourdomain.com and *.yourdomain.com. Storage location depends on your load balancer mode.

- Terraform >= 1.3 and gcloud CLI installed and authenticated

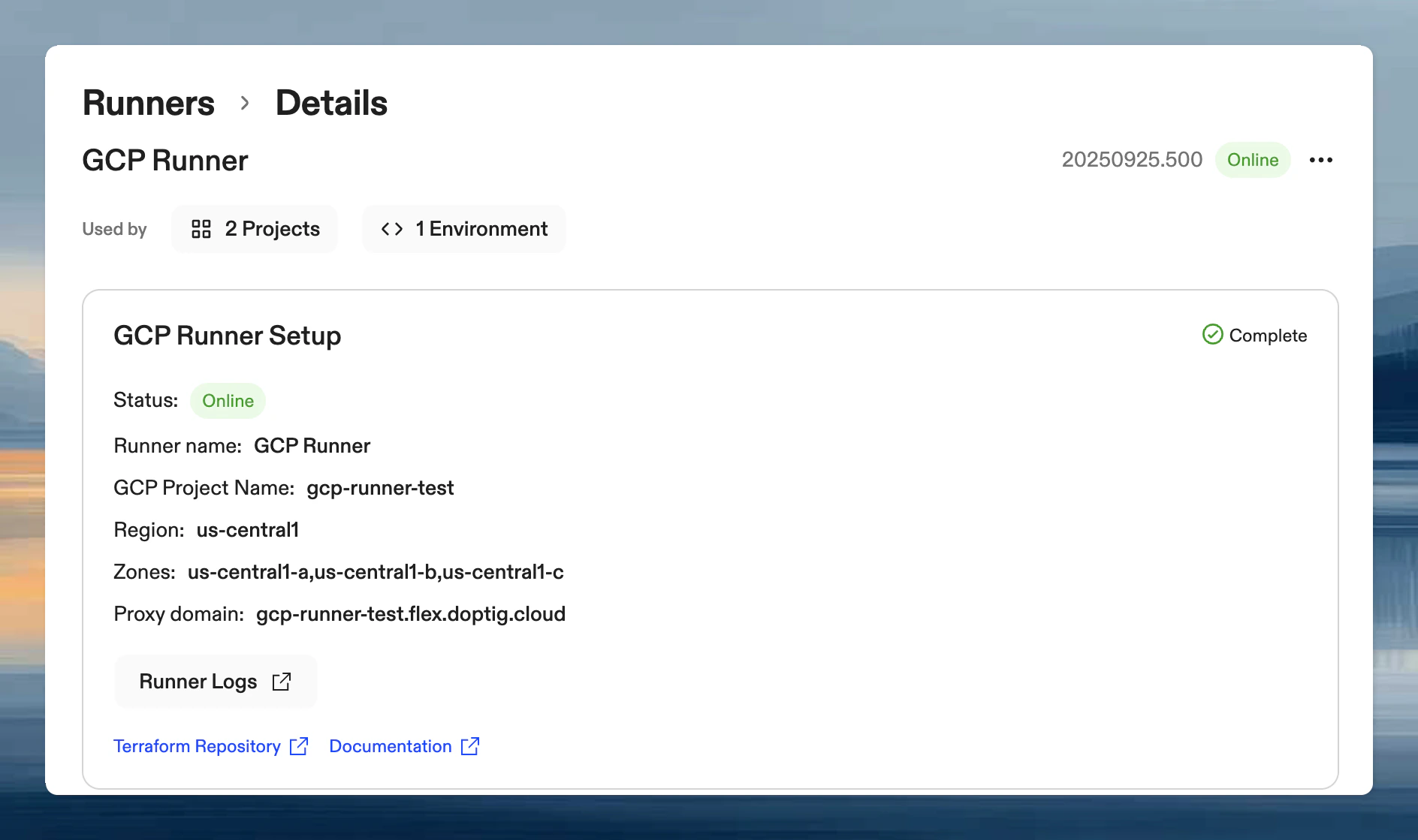

Create runner in Ona

- In the Ona dashboard, go to Settings → Runners and click Set up a new runner

- Select Google Cloud Platform as the provider

- Enter a name and click Create

Terraform module setup

Create a directory for your runner configuration and set up the following files.

main.tf references the Ona Terraform module with a GCS backend for state storage:
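A minimal main.tf sketch is given here for orientation. The bucket name, state prefix, and module source are placeholders, not values from this guide: use the module source from the Terraform configuration example in the Ona dashboard. The module label ona_runner matches the module path referenced later in this guide. Load balancer and certificate inputs are left out because they depend on your load balancer mode.

```hcl
terraform {
  required_version = ">= 1.3"

  # Remote state in GCS. The bucket must exist before `terraform init`.
  backend "gcs" {
    bucket = "your-terraform-state-bucket" # placeholder bucket name
    prefix = "ona-runner"                  # placeholder path prefix inside the bucket
  }
}

module "ona_runner" {
  # Placeholder: copy the real module source from the Ona dashboard example.
  source = "<ona-gcp-runner-module-source>"

  # Values provided by the Ona dashboard
  api_endpoint  = var.api_endpoint
  runner_id     = var.runner_id
  runner_token  = var.runner_token
  runner_name   = var.runner_name
  runner_domain = var.runner_domain

  # GCP project and location
  project_id = var.project_id
  region     = var.region
  zones      = var.zones

  # Existing network
  vpc_name           = var.vpc_name
  runner_subnet_name = var.runner_subnet_name
}
```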

Using a GCS backend stores your Terraform state remotely, enabling team collaboration and protecting against local state loss. Create the bucket beforehand with versioning enabled.

variables.tf declares input variables. Add optional variables from the advanced configuration section as needed:
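As a rough sketch, a few of the declarations might look like the following. The types are assumptions inferred from the example values in the variable reference, and marking runner_token as sensitive is a suggestion rather than something the module is known to require. The remaining required variables would follow the same pattern as api_endpoint.

```hcl
variable "api_endpoint" {
  description = "Ona management plane API endpoint (from Ona dashboard)"
  type        = string
}

variable "runner_token" {
  description = "Authentication token for the runner (from Ona dashboard)"
  type        = string
  sensitive   = true # redacts the token in plan and apply output
}

variable "zones" {
  description = "List of availability zones (2-3 recommended for HA)"
  type        = list(string)
}
```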

terraform.tfvars contains the values from the Ona dashboard and your GCP project. See the sections below for the full variable reference.
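For illustration, a terraform.tfvars built from the example values in the required-variable tables could look like this. Every value is a placeholder to replace with your own, and load balancer settings are not shown.

```hcl
# Values from the Ona dashboard
api_endpoint  = "https://app.gitpod.io/api"
runner_id     = "runner-abc123def456"
runner_token  = "eyJhbGciOiJSUzI1NiIs..." # truncated example; paste the full token
runner_name   = "my-company-gcp-runner"
runner_domain = "dev.yourcompany.com"

# GCP project and location
project_id = "your-gcp-project-123"
region     = "us-central1"
zones      = ["us-central1-a", "us-central1-b"]

# Existing network
vpc_name           = "your-company-vpc"
runner_subnet_name = "dev-environments-subnet"
```

Because this file holds the runner token, consider keeping it out of version control.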

Required configuration variables

These variables are required for every GCP Runner deployment. Most of them are pre-filled in the Terraform configuration example shown in the Ona dashboard after you create the runner.

Core Authentication Variables

These values are provided by the Ona dashboard when you create the runner.

| Variable | Description | Example Value | Required |
|---|---|---|---|
| api_endpoint | Ona management plane API endpoint (from Ona dashboard) | "https://app.gitpod.io/api" | ✅ Yes |
| runner_id | Unique identifier for your runner (from Ona dashboard) | "runner-abc123def456" | ✅ Yes |
| runner_token | Authentication token for the runner (from Ona dashboard) | "eyJhbGciOiJSUzI1NiIs..." | ✅ Yes |
| runner_name | Display name for your runner | "my-company-gcp-runner" | ✅ Yes |
| runner_domain | Domain name for accessing development environments | "dev.yourcompany.com" | ✅ Yes |

The api_endpoint value is provided in the Ona dashboard when you create the runner. If your organization uses a custom domain, the endpoint will reflect that domain instead.

GCP Project and Location

Specify the GCP project and region where the runner infrastructure will be created.

| Variable | Description | Example Value | Required |
|---|---|---|---|
| project_id | Your GCP project ID | "your-gcp-project-123" | ✅ Yes |
| region | GCP region for deployment | "us-central1" | ✅ Yes |
| zones | List of availability zones (2-3 recommended for HA) | ["us-central1-a", "us-central1-b"] | ✅ Yes |

Network and Ingress Configuration

Configure how inbound and outbound traffic reaches your runner by specifying your VPC, subnet, and load balancer settings.

| Variable | Description | Example Value | Required |
|---|---|---|---|
| vpc_name | Name of your existing VPC | "your-company-vpc" | ✅ Yes |
| runner_subnet_name | Subnet where runner and environments will be deployed | "dev-environments-subnet" | ✅ Yes |

Runner subnet sizing: /16 for non-routable ranges (large deployments) or /24 minimum for routable ranges.

External Load Balancer (Default)

Internal Load Balancer

certificate_secret_id must point to a Google Secret Manager secret containing both the certificate and private key as a JSON object:

Create the secret with gcloud, replacing the paths with your certificate and key files:

Advanced configuration

The settings below are for organizations with additional network or security requirements, such as corporate proxies, private CAs, encryption key management, or restricted IAM policies. Most deployments do not need these.

HTTP Proxy Configuration

For environments behind corporate firewalls:

| Variable | Description | Example Value |
|---|---|---|
| proxy_config.http_proxy | HTTP proxy server URL | "http://proxy.company.com:8080" |
| proxy_config.https_proxy | HTTPS proxy server URL | "https://proxy.company.com:8080" |
| proxy_config.no_proxy | Comma-separated list of hosts to bypass proxy | ".company.com,localhost,127.0.0.1" |
| proxy_config.all_proxy | All-protocol proxy server URL | "http://proxy.company.com:8080" |
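The dotted names in the table suggest that proxy_config is a single object variable. Under that assumption, which should be checked against the module's variable definitions, the settings could be written in terraform.tfvars roughly as follows, using the example values from the table:

```hcl
proxy_config = {
  http_proxy  = "http://proxy.company.com:8080"
  https_proxy = "https://proxy.company.com:8080"
  no_proxy    = ".company.com,localhost,127.0.0.1" # hosts that bypass the proxy
  all_proxy   = "http://proxy.company.com:8080"
}
```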

Custom CA Certificate

If your network uses a corporate proxy or internal services with certificates signed by a private Certificate Authority, configure the runner to trust your CA certificate:

| Variable | Description | Example Value |
|---|---|---|
| ca_certificate.file_path | Path to a CA certificate file | "./certs/corporate-ca.pem" |
| ca_certificate.content | CA certificate content (PEM-encoded string) | "-----BEGIN CERTIFICATE-----\n..." |

Set either file_path or content, not both.

This is commonly needed alongside HTTP Proxy Configuration when the proxy performs TLS inspection with a corporate CA.
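A possible terraform.tfvars entry is sketched below. It assumes ca_certificate is an object variable whose unused attribute can simply be omitted, which is not confirmed by this guide.

```hcl
# Option 1: point at a PEM file on disk
ca_certificate = {
  file_path = "./certs/corporate-ca.pem"
}

# Option 2 (use instead of option 1, never alongside it): inline PEM content
# ca_certificate = {
#   content = "-----BEGIN CERTIFICATE-----\n..."
# }
```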

Customer-Managed Encryption Keys (CMEK)

For compliance with organizational encryption policies:

| Variable | Description | Default Value |
|---|---|---|
| create_cmek | Automatically create KMS keyring and key | false |
| kms_key_name | Existing KMS key (when create_cmek = false) | null |

For additional configurations when using pre-existing CMEK keys, refer to the IAM configuration guide in the Terraform module.
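The two CMEK modes could be expressed in terraform.tfvars along these lines. The key path uses the standard Cloud KMS resource name format. Whether the module expects the full resource name, and the project, keyring, and key names shown, are assumptions.

```hcl
# Option 1: let the module create the KMS keyring and key
create_cmek = true

# Option 2 (alternative): reuse an existing key
# create_cmek  = false
# kms_key_name = "projects/your-gcp-project-123/locations/us-central1/keyRings/your-keyring/cryptoKeys/your-key"
```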

Pre-Created Service Accounts

By default, the Terraform module creates and manages three service accounts for the runner components:

| Service Account | Purpose |
|---|---|
| runner | Runner orchestrator. Manages environment lifecycle, compute instances, networking, secrets, build cache, and event processing |
| environment_vm | Environment VMs. Reads container images, writes logs and metrics |
| proxy_vm | Proxy VMs. Routes traffic to environments, reads secrets and container images |

You can provide a subset. Any service account left empty will be created and managed by the module automatically. The Terraform deployer account will also need fewer IAM permissions when using pre-created service accounts, since it no longer needs iam.serviceAccounts.create or resourcemanager.projects.setIamPolicy for those accounts.

Custom Images

Some enterprise networks do not allow pulling container images from external registries. In these cases, you can point the runner at images hosted in your own internal registry.

| Variable | Description | Example Value |
|---|---|---|
| custom_images.runner_image | Custom runner container image | "gcr.io/your-project/runner:v1.0" |
| custom_images.proxy_image | Custom proxy container image | "gcr.io/your-project/proxy:v1.0" |
| custom_images.prometheus_image | Custom Prometheus image | "gcr.io/your-project/prometheus:latest" |
| custom_images.docker_config_json | Docker registry credentials (JSON) | jsonencode({...}) |

If you must use custom images, set up an automated pipeline to sync images from Ona’s stable channel to your internal registry (e.g., Artifactory). Contact Ona support for guidance on image synchronization and release notifications.
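As a sketch, the image overrides might be set in terraform.tfvars as shown below. This assumes custom_images is an object variable and that docker_config_json may be omitted when the registry needs no credentials. Neither point is confirmed here.

```hcl
custom_images = {
  runner_image     = "gcr.io/your-project/runner:v1.0"
  proxy_image      = "gcr.io/your-project/proxy:v1.0"
  prometheus_image = "gcr.io/your-project/prometheus:latest"

  # docker_config_json is left out of this file: .tfvars files accept only
  # literal values, so an expression such as jsonencode({...}) has to be
  # written in main.tf (for example in the module block) instead.
}
```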

Shared VPC

If your organization uses a GCP Shared VPC where the VPC is hosted in a separate host project, set vpc_project_id to the project that owns the VPC. When omitted, the module assumes the VPC is in the same project as the runner (project_id).

| Variable | Description | Example Value |
|---|---|---|
| vpc_project_id | Project ID where the Shared VPC is located | "shared-vpc-host-project" |
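To show how the project and network variables relate in a Shared VPC setup, a terraform.tfvars fragment might read as follows. All values are the placeholder examples used elsewhere in this guide.

```hcl
project_id         = "your-gcp-project-123"    # service project where the runner is deployed
vpc_project_id     = "shared-vpc-host-project" # host project that owns the VPC
vpc_name           = "your-company-vpc"        # VPC in the host project
runner_subnet_name = "dev-environments-subnet" # subnet the runner and environments use
```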

Project Metadata Mode

By default the module uses google_compute_project_metadata, which is authoritative and removes any project metadata keys not declared in the module. Set use_authoritative_project_metadata = false to use per-key google_compute_project_metadata_item resources instead, which only manage enable-oslogin and gitpod-runner-id.
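The difference between the two modes can be illustrated with the underlying Google provider resources. This is not the module's actual code: the resource labels, project ID, and metadata values are assumptions for illustration, and the two alternatives should not be declared together in one configuration.

```hcl
# Alternative A, authoritative: this resource owns ALL project metadata.
# Any key not listed here is removed from the project on apply.
resource "google_compute_project_metadata" "authoritative" {
  project = "your-gcp-project-123"

  metadata = {
    "enable-oslogin"   = "TRUE"
    "gitpod-runner-id" = "runner-abc123def456"
  }
}

# Alternative B, per key: each resource manages one key only.
# Metadata keys set by other tools or teams are left untouched.
resource "google_compute_project_metadata_item" "enable_oslogin" {
  project = "your-gcp-project-123"
  key     = "enable-oslogin"
  value   = "TRUE"
}
```

The per-key form is the safer choice when other tooling also writes project metadata, since the authoritative form would delete those keys.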

Migrating an existing deployment

Before applying with use_authoritative_project_metadata = false on an existing deployment, migrate the Terraform state:

Replace <PROJECT_ID> with your GCP project ID. Adjust the module path if yours differs from ona_runner.

Labels

Apply GCP labels to all resources created by the module. Labels are useful for cost attribution, filtering in billing reports, and organizational policies.

Deploy

Initialize Terraform to download the module, then validate, plan, and apply:

Post-deployment

After terraform apply completes, retrieve the load balancer IP and configure DNS:

Next steps

With your GCP Runner successfully deployed and verified:

- Configuring Repository Access - Set up access to your Git repositories and authentication

- Private GAR Images - Configure access to private Google Artifact Registry

- Google Vertex AI - Use Vertex AI as the LLM provider for Ona Agent on this runner

- Troubleshooting Guide - Troubleshooting and monitoring guide